What is Kubernetes?

Kubernetes (also known as K8s) is an open-source container-orchestration system created by Google in 2014 and now maintained by the Cloud Native Computing Foundation (CNCF), which is part of the Linux Foundation. The word Kubernetes comes from Greek and means “helmsman” or “pilot”, which explains the ship’s wheel (helm) on the logo!

By container-orchestration system, we mean a system that automatically deploys, manages and scales your applications.

Can you explain it differently?

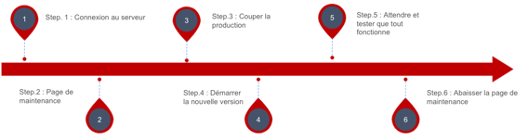

OK, let’s take an example. Let’s say I own a website (i.e. an application). The version currently in production is 1.0.0 and I would like to deploy version 1.1.0. In a classical infrastructure, I would:

- Connect to the server

- Raise the maintenance page (the website is now inaccessible to the users)

- Shut down version 1.0.0 of the application

- Pull the code and start the new version

- Wait and test that the new version is ready for users

- Lower the maintenance page: the new version of the website is now available to users

If anything goes wrong, for example if the website crashes, we have to repeat the whole procedure to redeploy the last stable version of the application (1.0.0). This technique causes a lot of downtime, and downtime makes users unhappy.

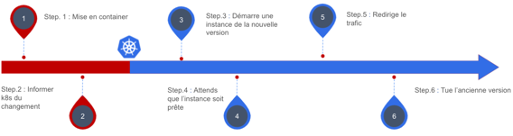

Now, let’s see how Kubernetes handles it:

- Containerise version 1.1.0 of the code, meaning we package it in a container

- Tell Kubernetes that a new version of the website exists

- Sit back and watch!

Kubernetes will start a new pod containing the latest version of the website. This pod will not receive any user traffic until K8s considers it healthy and ready. During this process, users still have access to the old version of the website.

When K8s considers the new version ready, it gradually shifts traffic from the old version to the new one, starting new pods and stopping old ones a few at a time. If the new pods never become ready, the rollout stalls and the old pods keep serving users; you can then roll back to version 1.0.0 with a single command (`kubectl rollout undo`).
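The rollout described above can be sketched as a small simulation. This is a simplified model, not Kubernetes’ actual Deployment controller: it assumes the update may run one extra pod at a time (`maxSurge: 1`) and never drops below the requested number of ready pods (`maxUnavailable: 0`), and `becomes_ready` is a hypothetical stand-in for the pod’s readiness check.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Pod:
    version: str
    ready: bool


def rolling_update(replicas: int, old: str, new: str,
                   becomes_ready: Callable[[int], bool]) -> List[List[str]]:
    """Simulate a rolling update with maxSurge=1 and maxUnavailable=0 (assumed values).

    Returns, after each step, the versions of the pods that are ready and
    therefore receive user traffic.
    """
    pods = [Pod(old, True) for _ in range(replicas)]
    history = [[p.version for p in pods if p.ready]]
    for i in range(replicas):
        # Surge: start one extra pod running the new version.
        candidate = Pod(new, becomes_ready(i))
        pods.append(candidate)
        if not candidate.ready:
            # The rollout stalls: the unready pod gets no traffic,
            # so the remaining old pods keep serving users.
            history.append([p.version for p in pods if p.ready])
            break
        # The new pod is ready, so one old pod can be stopped.
        idx = next(j for j, p in enumerate(pods) if p.version == old)
        del pods[idx]
        history.append([p.version for p in pods if p.ready])
    return history
```

With three replicas and healthy new pods, every step keeps three pods serving traffic, moving from all 1.0.0 to all 1.1.0. If the new pods never become ready, the simulation stops with the three 1.0.0 pods still serving.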

With this technique, downtime is almost eliminated! And yes, that also means no maintenance page!

OK, but K8s runs on a server, right?

Not exactly: it’s not a server but a cluster, i.e. multiple computers called nodes. K8s manages these nodes through what we call the control plane. The control plane distributes the pods (all your applications) across the nodes, placing each pod on a node with enough free CPU and memory so that no single node gets overloaded. Correctly configured (for example with a cluster autoscaler), Kubernetes can even request a new node when the existing ones are full.
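To make the placement idea concrete, here is a minimal sketch of how a scheduler could pick a node. The real scheduler (kube-scheduler) weighs many more factors; this hypothetical `schedule` function only looks at the CPU each pod requests, in millicores, and prefers the node with the most free CPU. When no node fits, it returns `None`: the pod would stay pending, which is the situation where a cluster autoscaler, if installed, could add a node.

```python
from typing import Dict, Optional


def schedule(pod_cpu_m: int, free_cpu_m: Dict[str, int]) -> Optional[str]:
    """Pick a node for a pod requesting `pod_cpu_m` millicores of CPU.

    Filter: keep only nodes with enough free CPU.
    Score: prefer the node with the most free CPU (a simplified
    "least allocated" strategy). Returns None when no node fits.
    """
    candidates = {n: free for n, free in free_cpu_m.items() if free >= pod_cpu_m}
    if not candidates:
        return None  # the pod would stay Pending
    node = max(candidates, key=lambda n: candidates[n])
    free_cpu_m[node] -= pod_cpu_m  # reserve the CPU on the chosen node
    return node
```

For example, with `{"node-a": 2000, "node-b": 1500}` free millicores, three pods of 500m land on `node-a`, `node-a` (tie broken by order), then `node-b`, and a fourth pod asking for 1200m no longer fits anywhere.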

The advantages of Kubernetes

Security

A cluster is divided into namespaces. Most Kubernetes objects (pods, config maps, etc.) live in a namespace; a few, such as nodes, are cluster-wide.

By default, a pod (an instance of an application) isn’t exposed outside the cluster. Inside the cluster, however, pods can talk to each other freely, even across namespaces, until you decide otherwise. You have full control over what is accessible and what isn’t.

You can create what we call network policies to define which pods can talk to which, and on which ports. You can also allow traffic from specific sources (a namespace, a set of pods, or an IP range such as the internet) into your pods, and restrict where their outgoing traffic may go.
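The rule that makes network policies work can be sketched in a few lines: a pod that no policy selects accepts traffic from everyone, but as soon as a policy selects it, only the sources that some policy explicitly allows can reach it. This is a simplified model of ingress rules only; the class names are hypothetical, and namespaces are matched by name here, whereas real policies select namespaces by their labels.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Pod:
    namespace: str
    labels: Dict[str, str]


@dataclass
class Peer:
    """An allowed source: pods matching `labels` in `namespace`
    (None means the policy's own namespace)."""
    labels: Dict[str, str]
    namespace: Optional[str] = None


@dataclass
class NetworkPolicy:
    namespace: str
    pod_selector: Dict[str, str]  # which pods this policy protects
    allow_from: List[Peer] = field(default_factory=list)


def matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    # A selector matches when every key/value pair is present in the labels.
    return all(labels.get(k) == v for k, v in selector.items())


def ingress_allowed(src: Pod, dst: Pod, policies: List[NetworkPolicy]) -> bool:
    selecting = [p for p in policies
                 if p.namespace == dst.namespace and matches(p.pod_selector, dst.labels)]
    if not selecting:
        return True  # no policy selects dst: all traffic is allowed
    # dst is selected: allow only sources listed by at least one policy.
    return any(
        src.namespace == (peer.namespace or policy.namespace)
        and matches(peer.labels, src.labels)
        for policy in selecting
        for peer in policy.allow_from
    )
```

With a policy in `prod` that protects pods labelled `app: db` and only allows `app: api`, the API pod can reach the database, while a web pod in `prod` or an API pod in `dev` cannot; the web pod can still reach the API pod, because no policy selects it.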

Load management

A single instance of an application is often not enough to handle a lot of users, especially during peak hours. Kubernetes can help with that! By monitoring CPU usage, the Horizontal Pod Autoscaler scales the number of instances of your application up or down: if your pods use, on average, more than X% of the CPU they requested, K8s starts another instance (a new pod) to absorb the load, and removes pods again when the load drops.
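The Horizontal Pod Autoscaler’s documented rule is `desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric)`, with no change when the ratio is within a tolerance of 1 (0.1 by default). The function below is a minimal sketch of that rule; its name and the default bounds of 1 and 10 replicas are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas: int, current_cpu_pct: float,
                     target_cpu_pct: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Replica count suggested by the autoscaling formula.

    current_cpu_pct: average CPU usage of the pods, as a % of their CPU request.
    target_cpu_pct: the X% target configured for the autoscaler.
    """
    ratio = current_cpu_pct / target_cpu_pct
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: do nothing
    desired = math.ceil(current_replicas * ratio)
    # Never go below or above the configured bounds.
    return max(min_replicas, min(max_replicas, desired))
```

For example, 3 pods averaging 90% CPU against a 50% target give `ceil(3 × 1.8) = 6` pods, while 4 pods averaging 20% shrink to `ceil(4 × 0.4) = 2`.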

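If the load is network traffic such as HTTP requests, a service spreads it across all the ready pods behind it. Depending on how kube-proxy is configured, each connection goes to a randomly chosen pod (iptables mode, the default on Linux) or is distributed with an algorithm such as round-robin or least-connections (IPVS mode).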
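Round-robin, one of the algorithms mentioned above, simply hands requests to each pod in turn. The sketch below shows only the idea; the class name and the pod IP addresses are hypothetical, and in a real cluster this choice is made by kube-proxy, not by your application.

```python
from itertools import cycle
from typing import Iterator, List


class RoundRobinBalancer:
    """Hands out backend pods in turn: pod 1, pod 2, ..., pod N, pod 1, ..."""

    def __init__(self, pod_ips: List[str]) -> None:
        if not pod_ips:
            raise ValueError("a service needs at least one ready pod")
        self._next: Iterator[str] = cycle(pod_ips)

    def pick(self) -> str:
        # Return the next pod in the rotation.
        return next(self._next)
```

With three pods, five requests go to pods 1, 2, 3, 1 and 2, so the load stays evenly spread.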

Configuration management

Each application needs configuration to run properly. K8s offers two types of objects to handle it: config maps and secrets.

Each type can be injected as a file or as environment variables directly inside a pod, making it easy to manage your different environments (dev, QA, production, etc.).

So if you need to change a specific setting or a secret token, update your config map or secret and restart the pod so that it picks up the new values!
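From the application’s point of view, injected configuration is just environment variables and files. Here is a minimal sketch of an app reading both. The variable names `APP_ENV` and `LOG_LEVEL`, the mount path `/etc/secrets` and the key `api-token` are all hypothetical; they would be whatever your pod definition declares (when a secret is mounted as a volume, each key becomes a file).

```python
import os
from pathlib import Path


def load_config(secret_dir: str = "/etc/secrets") -> dict:
    """Read settings the way a containerised app typically does in Kubernetes.

    - APP_ENV and LOG_LEVEL are assumed to come from a config map injected
      as environment variables (with fallbacks for local runs).
    - The API token is assumed to come from a secret mounted as files in
      `secret_dir`, one file per key.
    """
    return {
        "env": os.environ.get("APP_ENV", "dev"),
        "log_level": os.environ.get("LOG_LEVEL", "INFO"),
        "api_token": (Path(secret_dir) / "api-token").read_text().strip(),
    }
```

Because the code only reads the environment and a directory, the same image runs unchanged in dev, QA and production; only the config map and secret differ.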

Routing

By default, a pod isn’t accessible from outside the cluster. So if you want to call a pod, you have two options. You can call it directly by its IP address, but this is impractical because the IP isn’t static (it changes whenever the pod is recreated) and it only works from inside the cluster. The second option is to use a service, which has its own stable internal IP address and an internal DNS name (managed by the cluster’s DNS). You can then configure the service to be accessible from outside the cluster. And the best part? A service can route traffic to multiple pods!
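Two details make services work: a service picks its pods with a label selector (every key/value of the selector must appear in the pod’s labels, and only ready pods receive traffic), and its DNS name follows the pattern `<service>.<namespace>.svc.<cluster-domain>`, where the cluster domain is `cluster.local` unless configured otherwise. The sketch below illustrates both; the function names and the dictionary shape used for pods are hypothetical.

```python
from typing import Dict, List


def service_dns_name(service: str, namespace: str,
                     cluster_domain: str = "cluster.local") -> str:
    # Fully qualified name that the cluster DNS resolves to the service's internal IP.
    return f"{service}.{namespace}.svc.{cluster_domain}"


def endpoints(selector: Dict[str, str], pods: List[Dict]) -> List[str]:
    """IPs of the ready pods whose labels contain every key/value of the selector."""
    return [
        p["ip"] for p in pods
        if p["ready"] and all(p["labels"].get(k) == v for k, v in selector.items())
    ]
```

For a service named `website` in the `prod` namespace, other pods can call `website.prod.svc.cluster.local`, and the traffic only reaches ready pods labelled `app: website`.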

Open and highly configurable

K8s is open source, so if you want to understand the logic behind every object and its behaviour, go to the code and dig! Being open source has another advantage: a large community is there to help and to fix bugs.

One of Kubernetes’ main strengths is its versatility: there is a multitude of external products you can run inside a cluster to help manage your applications, like Datadog, Istio, Calico, etc. And if you don’t find the answer to your problem in Kubernetes or in third-party tools, you can create your own object types (custom resources)!

And going further…

You can get hands-on experience with K8s through Katacoda’s free courses.

Glossary

Container: software unit which packages source code with all the dependencies it needs, independently of the computer on which it’s running. Using a container makes the code behave the same way on any machine that runs it. Containers are also much lighter to run than virtual machines (VMs) because they share the host’s operating-system kernel instead of each running a full guest operating system.

Downtime: a period when an application isn’t available to its users. This includes, among other things, maintenance and crashes. This metric is directly linked to the availability of a service.

Pod: object (software unit) of Kubernetes which manages one or more containers. A pod is one instance of the application.

Node: computer (virtual or not) that is part of a cluster.

Cluster: multiple nodes managed by a Kubernetes control plane.

Namespace: space inside a cluster where you can deploy pods, services, etc. The purpose of namespaces is to divide your cluster into understandable spaces (for example one namespace per environment: dev, QA, pre-production, production).

Load-balancer: a type of Kubernetes service which exposes a set of pods outside the cluster (usually through a load balancer provided by the cloud platform) and distributes the incoming traffic between them.

Service: object (software unit) of Kubernetes which has a stable internal IP address and DNS name, so you can communicate with a set of pods.